
Thank you for your interest. This deserves its own page in the docs. I'll try to answer in a few hours when I'm on my computer. In the meantime, would you like to describe your deployment environment (VDS, serverless, edge, etc.)?


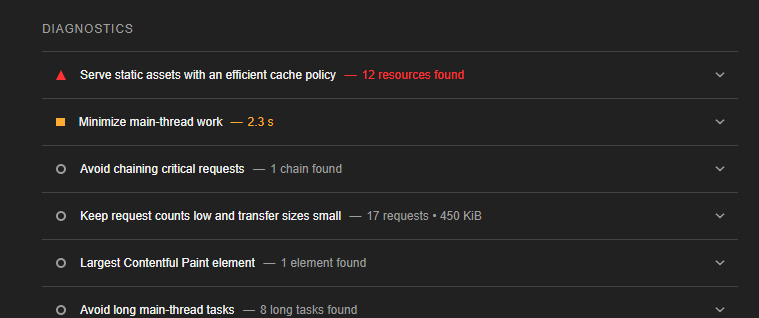

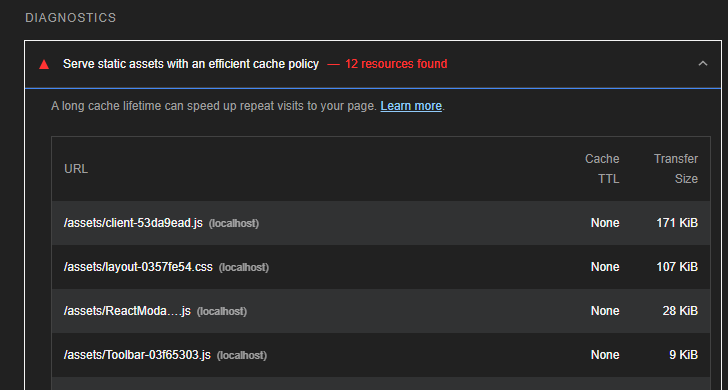

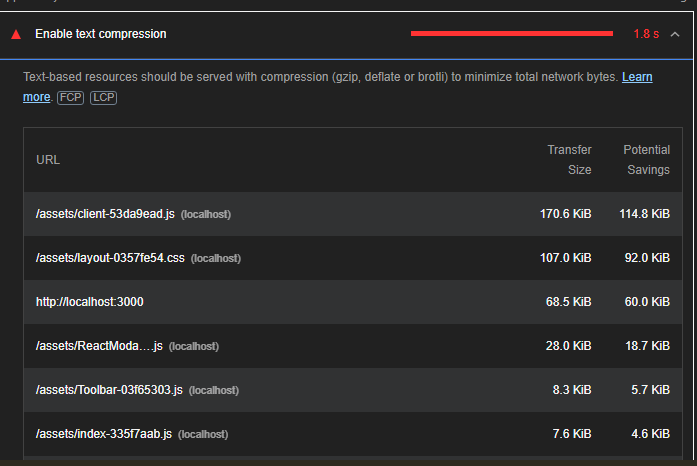

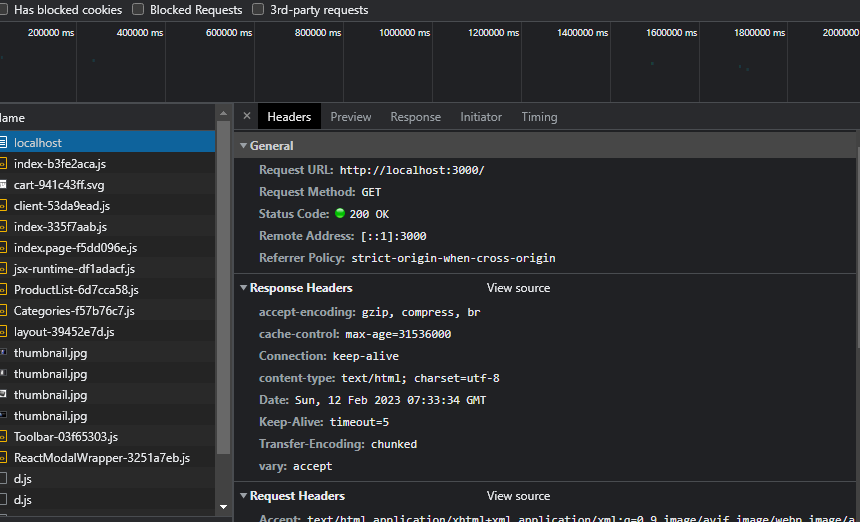

Caveat emptor

Node can handle TLS termination (HTTPS), HTTP/2, and compression. But before diving in: I think all of these should be handled outside of Node by a reverse proxy like Nginx, because it can handle all of them more efficiently. So I would recommend leaving the generated server alone and focusing on optimizing your Nginx/Apache/whatever settings. I understand the urge to measure performance locally, but I don't think the results are representative of real-world use in any way.

Optimizing static assets

Behind the scenes, Rakkas uses vavite to build a Node server for your application. In particular, it uses the @vavite/connect package. And @vavite/connect, in turn, uses …

Caching

Lighthouse is complaining about the cache headers of your static assets, not your pages.

Rakkas generates three types of static assets. They all end up in …

The first type (assets copied from the …

The second type of assets always end up in the …

The third type should not be cached too aggressively because they probably change on every deploy. Rakkas allows you to set …

The following …

```ts
import { createMiddleware } from "rakkasjs/node-adapter";
import hattipHandler from "./entry-hattip";
import type { Options } from "sirv";

export default createMiddleware(hattipHandler);

export const sirvOptions: Options = {
  // E-tag is a good strategy for most files, including prerendered HTML
  etag: true,
  // For the files in assets dir, we can go wild, we can even specify immutable
  setHeaders(res, pathname) {
    if (pathname.startsWith("/assets/")) {
      res.setHeader("Cache-Control", "public, max-age=31536000, immutable");
    }
  },
};
```

Enabling compression

Like I said before, I believe we should leave compression to Nginx or similar. But you can use vite-plugin-compression to precompress static assets during build and configure …

```ts
import { defineConfig } from "vite";
import rakkas from "rakkasjs/vite-plugin";
import tsconfigPaths from "vite-tsconfig-paths";
import compression from "vite-plugin-compression";

export default defineConfig((env) => ({
  plugins: [
    tsconfigPaths(),
    rakkas(),
    // This plugin is only needed for the client build
    !env.ssrBuild && compression({ algorithm: "gzip" }),
    !env.ssrBuild && compression({ algorithm: "brotliCompress" }),
  ],
}));
```

Then you should update your …

```diff
 // ...

 export const sirvOptions: Options = {
+  gzip: true,
+  brotli: true,
   // E-tag is a good strategy for most files, including prerendered HTML
   etag: true,
   // For the files in assets dir, we can even specify immutable
   setHeaders(res, pathname) {
     if (pathname.startsWith("/assets/")) {
       res.setHeader("Cache-Control", "public, max-age=31536000, immutable");
     }
   },
 };
```

This doesn't precompress prerendered HTML files. We may add that as a feature, but it's not a priority because, like I said, I believe compression should be handled by the reverse proxy.

These changes should bring you closer to a 100% score in your local environment.

You can also add cache headers for your pages, but be very careful with aggressive cache control headers. By their very nature, pages tend to change.
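To make the asset-versus-page distinction concrete, here is a minimal sketch of a cache policy you could call from sirv's `setHeaders` callback. `cacheControlFor`, `setCacheHeaders` and the `HeaderSink` type are hypothetical helpers, not part of Rakkas or sirv. The rule that prerendered pages are the paths ending in `.html` or `/` is also my assumption, so adjust it to your routes. Pages get `no-cache`, which still lets the browser store them but forces a revalidation (using the ETag) before each reuse, so a new deploy shows up immediately.

```typescript
// Minimal structural type for the response object sirv passes to setHeaders
// (a Node http.ServerResponse at runtime).
interface HeaderSink {
  setHeader(name: string, value: string): unknown;
}

// Hypothetical helper, not part of Rakkas or sirv: picks a Cache-Control
// value for a path served from the client build output.
function cacheControlFor(pathname: string): string | undefined {
  // Vite gives files under /assets/ content-hashed names, so the bytes behind
  // a given URL never change: cache for a year and skip revalidation.
  if (pathname.startsWith("/assets/")) {
    return "public, max-age=31536000, immutable";
  }
  // Assumption: prerendered pages are the paths ending in .html or "/".
  // They may change on any deploy, so the browser may keep a copy but must
  // revalidate it (via the ETag) before every reuse.
  if (pathname.endsWith(".html") || pathname.endsWith("/")) {
    return "no-cache";
  }
  // Everything else: send no Cache-Control, leaving the browser's default
  // caching behavior plus ETag revalidation.
  return undefined;
}

// Body you could call from sirv's setHeaders(res, pathname) callback.
function setCacheHeaders(res: HeaderSink, pathname: string): void {
  const value = cacheControlFor(pathname);
  if (value !== undefined) {
    res.setHeader("Cache-Control", value);
  }
}
```

With this in place, the `setHeaders` body in `sirvOptions` would shrink to `setCacheHeaders(res, pathname)`. Choosing `no-cache` over a short `max-age` costs one conditional request per page view (usually a cheap 304 thanks to the ETag), but you never serve a stale page after a deploy.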

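Since prerendered HTML isn't precompressed by the vite-plugin-compression setup, here is a rough sketch of what precompression amounts to, in case you still want to experiment with it locally. It uses only Node's built-in `fs`, `path` and `zlib` modules. `precompressHtml` is a hypothetical post-build helper, not something Rakkas or vite-plugin-compression provides. It writes `.gz` and `.br` files next to each `.html` file, which is the sibling layout sirv's `gzip` and `brotli` options look for. I'm not assuming a particular output directory; pass whichever one holds your prerendered pages.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";
import * as zlib from "node:zlib";

// Hypothetical post-build step (not part of Rakkas): walks `dir` recursively
// and writes `<name>.html.gz` and `<name>.html.br` next to every `.html` file.
// Returns the paths of the files it wrote.
function precompressHtml(dir: string): string[] {
  const written: string[] = [];
  for (const entry of fs.readdirSync(dir, { withFileTypes: true })) {
    const file = path.join(dir, entry.name);
    if (entry.isDirectory()) {
      // Recurse into nested routes such as about/index.html.
      written.push(...precompressHtml(file));
      continue;
    }
    // Only HTML files; existing .gz/.br outputs don't end in .html, so reruns are safe.
    if (!entry.isFile() || !entry.name.endsWith(".html")) continue;

    const source = fs.readFileSync(file);
    // Compression cost is paid once at build time, so use the smallest output settings.
    fs.writeFileSync(
      `${file}.gz`,
      zlib.gzipSync(source, { level: zlib.constants.Z_BEST_COMPRESSION }),
    );
    fs.writeFileSync(
      `${file}.br`,
      zlib.brotliCompressSync(source, {
        params: { [zlib.constants.BROTLI_PARAM_QUALITY]: zlib.constants.BROTLI_MAX_QUALITY },
      }),
    );
    written.push(`${file}.gz`, `${file}.br`);
  }
  return written;
}
```

You would run it once after `vite build`, for example from a small script. With `gzip: true` and `brotli: true` in `sirvOptions`, sirv can then pick the matching file based on the request's `Accept-Encoding` header instead of compressing on the fly.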