New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Channel inactive not fired after SslCloseCompletionEvent(SUCCESS) causing connection still in pool hence io.netty.handler.ssl.SslClosedEngineException: SSLEngine closed already #2498

Comments

|

@cyfax ,

thanks. |

|

In addition, can you attach a tcpdump of the traffic when the exception happens ? |

|

Sorry, for the late response :

Apache Tomcat/9.0.39 wrapped in sring boot 2.4.0

Nothing is set up explicitly, all values are default ones configured by spring boot or from tomcat otherwise.

Here is the configuration :timeoutSeconds = 5 public void initWebClient() {

final HttpClient httpClient = HttpClient.create()

.wiretap(true)

.responseTimeout(Duration.of(timeoutSeconds, ChronoUnit.SECONDS));

final ClientHttpConnector connector = new ReactorClientHttpConnector(httpClient);

final WebClient webClient = WebClient.builder().baseUrl(hostFsvUrl).defaultHeaders(httpheaders -> {

httpheaders.add(HttpHeaders.CONTENT_TYPE, MediaType.APPLICATION_JSON_VALUE);

httpheaders.add(HttpHeaders.ACCEPT, MediaType.APPLICATION_JSON_VALUE);

httpheaders.add(API_KEY, hostFsvApiKey);

}).clientConnector(connector).build();

init(webClient);

} |

|

@pderop

|

I am working on this. |

|

@cyfax , Hi, I'm currently investigating, I prefer to be sure before responding ...will let you know asap. |

|

@cyfax , What you suggested in 1/ seems correct: the connection is still in the pool while we are receiving a close_notify from the server. When the server closes the connection (for whatever reasons), it first sends a close_notify, then after it closes the connection. So, if the application acquires the connection after the close_notify is received but before the socket is closed by the server, then it will get the SSLEngine closed already exception when the request is flushed. I'm preparing a patch which handles the close_notify in order to close and invalidate the connection if it is in the pool. |

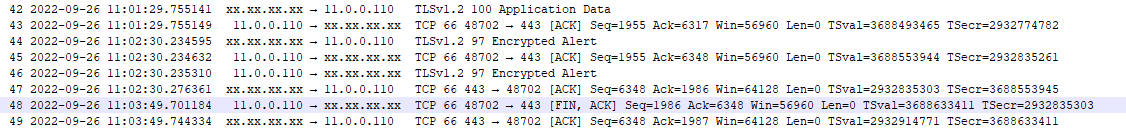

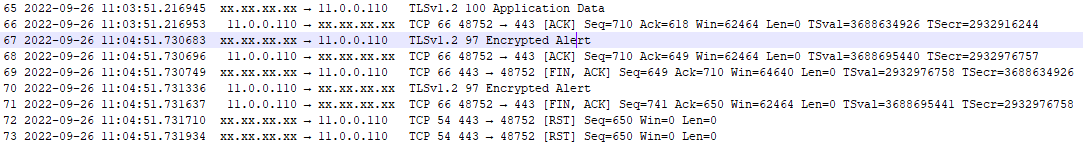

Here is a new failure @11:02:30 (SSL close not followed by inactive channel) After 'Encrypted Alert' no FIN-ACK is sent/received. When everything is fine, FIN - ACK is SENT by server after 'Encrypted Alert' : and the connection is unregistered from the pool. Full logs : NOK.1.log From my understanding the client is waiting for the server to close the connection (explaining why more than 5mn elapsed between SslCloseCompletionEvent & SslClosedEngineException in NOK.log). |

|

some updates: I'm still investigating, sorry for the bit of delay (please ignore my previous message for the moment, I'm still investigating). So, based on the last NOK.1.log and from the tcpdump, let's focus on the 48702 port. so, indeed, it seems that the client waits for the server FIN, which is not sent by the server after the initial close_notify, and at some point in time, when the client acquires and writes to the connection, then it gets the "SSLEngine closed already" exception, and the client then closes the connection. let's see this from your provided logs: at 11:02:30.234595 from tcpdump: the server sends the close_notify to the client, which acknowledges it immediately: From NOK.1.log, we see that the client has received the close_notify at 11:02:30.235 But after that, the server does not send a FIN to the client, even if this one has acknowledged the server close_notify with a client close_notify message (see at 11:02:30.235310) And after 1,19 minute, at 11:03:49.680, the client then acquires the connection from the pool, writes to it, and is getting the "SslClosedEngineException: SSLEngine closed already": then, at 11:03:49.696, the client closes the connection: and at 11:03:49.701184, from tcpdump, we then see that the FIN is sent from the client to the server: so, first, it's strange that the server is not closing the connection after having received the close_notify ack sent by the client. now, we are still investigating, and after discussion with the team, we do not desire the do a patch for the moment, because closing the connection when receiving the close-notify will only resolve your particular scenario, but won't avoid other scenarios where you have already acquired the connection: in this case, if the close_notify is received while you are writing to the acquired connection, then you will get a "Connection prematurely closed BEFORE response" even with the patch. Moreover, we are still investigating if something else could be done. In the meantime: Please consider to do what Violeta has suggested from this old issue, which seems to be the same problem: in the issue the problem was that the server was using a Keep-Alive timeout, and the server then sends a close_notify after some period of connection inactivity. If you are using tomcat without any specific configuration, then Keep-Alive configuration is 60 sec if I'm correct (check #1318 (comment)). And indeed, from your tcpdump.txt, the server sends a close_notify after around 60 sec if inactivity: So, please consider to use a maxIdleTime on the Reactor Netty's connection pool to be less than the value on the server (check #1318 (comment)) |

|

@pderop related tcpdump : I was expecting the WebClient to close the connection since the connection was idle for more than 20 seconds. |

|

I see in the logs that the evictionInterval = 0 (evictionInterval=PT0S), can you check and configure the ConnectionProvider evictInBackground to a positive value, like 5 seconds ? |

|

hi @cyfax , After checking with the Netty team, we changed our mind, and the PR #2518 will be merged soon, which closes the connection when receiving a close_notify, this should resolve in particular your issue in case the close_notify is received while the connection is idle in the connection pool. But as described in the PR, some racy condition where the close_notify is received while a request is being written or when the request has been flushed but the response is waited for, then in this case you will get a PrematureCloseException, which we can't avoid in this case. Now, as said before, for the moment the work around suggested here should also address the issue. In your last message, evictionInterval is set to 0, that's probably why the timer was not triggered, so maybe you need to double check why evictionInterval=PT0S and set it to a positive value like 5 seconds. thanks. |

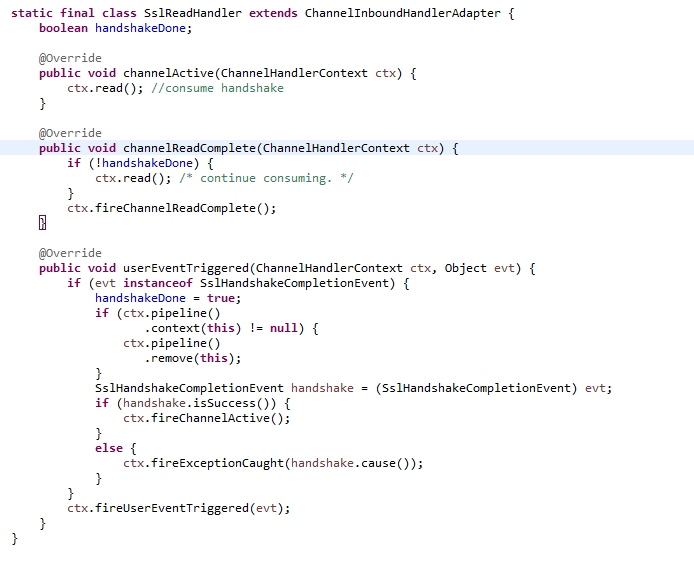

#2518) When a TLS close_notify is received and when the close_notify ack is replied, then the Netty SslHandler just does not close the channel. Instead of that, in that case it fires a SslCloseCompletionEvent.SUCCESS event down the pipeline, and the closing of the channel is left to other handlers. And since the TLS RFC states that the party receiving a close_notify MUST respond with a close_notify alert of its own and close down the connection immediately, let's do this in Reactor-Netty. This patch won't avoid AbortedExceptions or PrematureCloseException exceptions in case a close_notify is being received while a request is being written, or while a response is waited for, but it may address some of the pain highlighted by #2498, #2499, and #2509; and may avoid SslClosedEngineException: SSLEngine closed already " exceptions.

|

hi @pderop I made new tests with maxIdleTime and it is fine : tcpdump : |

Glad to know my suggestion and proposed fix was quite good :)

|

From time to time a connection is still in pool but should not.

Expected Behavior

SslCloseCompletionEvent(SUCCESS) not correctly handled is causing connection still present in pool hence "io.netty.handler.ssl.SslClosedEngineException: SSLEngine closed already" thrown on next usage of the connection.

A Channel 'INACTIVE' shoud be fired after SslCloseCompletionEvent(SUCCESS)

Here is a fine example of a multi used (7 times) connection :

At the end, the connection is unregistered as soon as the sslCloseCompletion is fired :

Full logs : OK.log

Actual Behavior

In undetermined circumstances, SslCloseCompletionEvent is not followed by INACTIVE

In this example, the connection is reused 5min06 after SslCloseCompletionEvent

Contrary to what happened in "good" example above there is only one 'READ COMPLETE' event fired.

May be this is the reason why fireChannelInacctive is not triggered.

Full logs : NOK.log

Steps to Reproduce

Unfortunatly, I have no example to provide since this happen randomly.

Possible Solution

Bad idea, the SslReadHandler is not in the pipeline anymore when this happen.

In reactor.netty.tcp.SslProvider.SslReadHandler.userEventTriggeredaddYour Environment

netty, ...): netty : 4.1.81.Finaljava -version): openjdk version "1.8.0_342"uname -a): Linux 18.04.1-Ubuntu SMP Mon Aug 23 23:07:49 UTC 2021 x86_64 x86_64 x86_64 GNU/LinuxThe text was updated successfully, but these errors were encountered: